Rather than jumping straight into Figma, I focused on understanding the underlying problem space. This meant studying why existing AI patterns exist, what risks they address, and where they fail.

This wasn’t aesthetic design; it was systems design grounded in risk analysis and industry research.

1

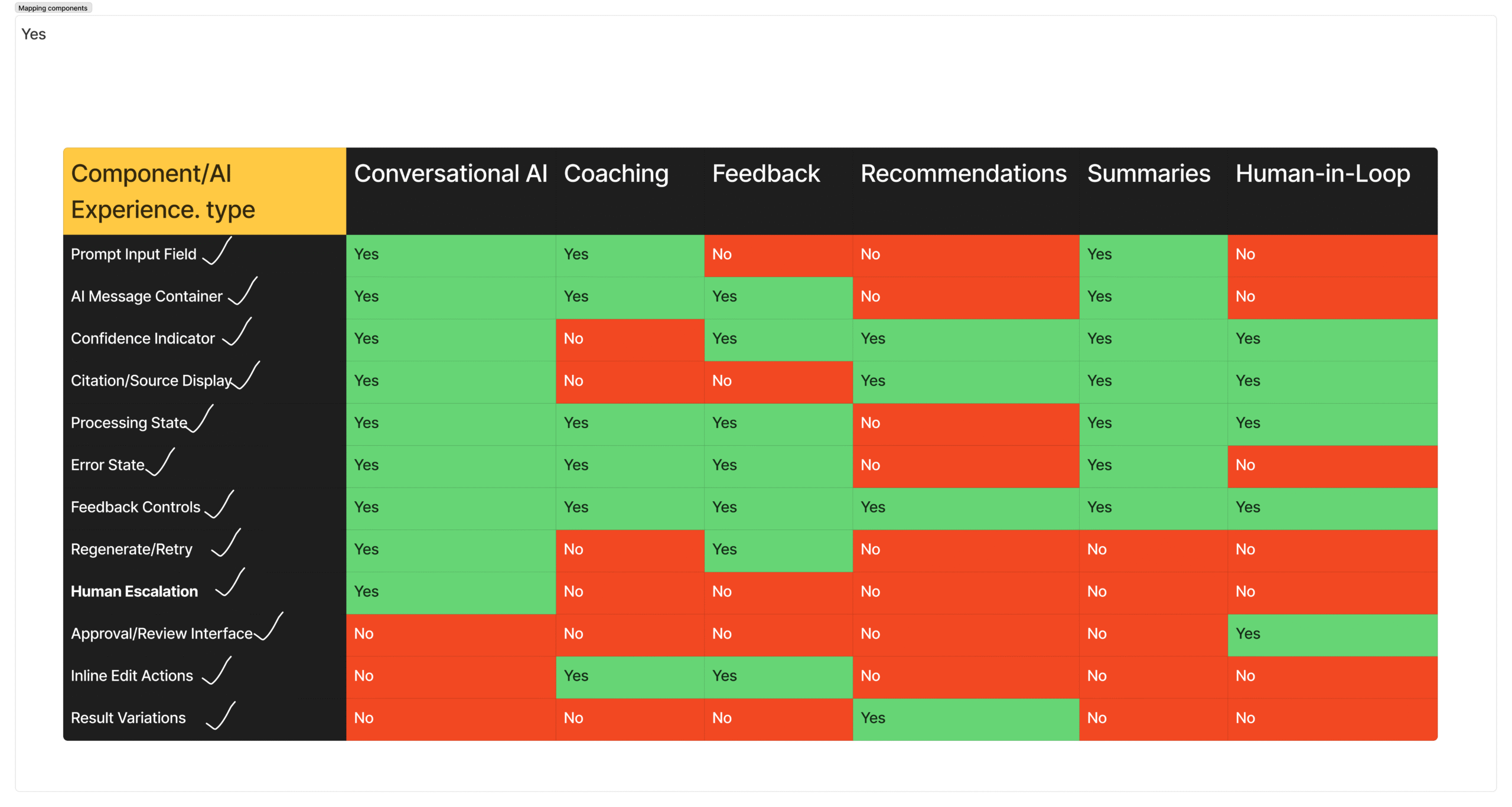

Experience Type Taxonomy

I defined six core AI experience types: Conversational AI, Recommendations, Coaching & Guidance, Human-in-the-Loop, Feedback & Review, and Summaries & Insights.

Key insight: Each experience type carries fundamentally different risks. Conversational AI raises trust and overreliance concerns. Recommendations surface bias and assumptions. Human-in-the-Loop flows risk approval fatigue and disengagement. This classification created structure for designing context-aware solutions.

2

Risk Mapping Framework

Using Google PAIR’s AI risk framework, I mapped failure modes to each experience type. I identified five primary risk categories: Trust & Overconfidence, Explainability Gaps, Bias & Assumptions, Loss of Agency, and Approval Fatigue.

Why this mattered: This became the foundation for decision-making. Every design choice and component was tied to a specific, documented risk.
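As a rough illustration, the mapping between experience types and risk categories could be captured as a typed lookup. This is a minimal sketch, not the project's actual artifact: the names `riskMap` and `risksFor` are hypothetical, and only the three pairings named in the taxonomy step are filled in. Matching "trust and overreliance" to the Trust & Overconfidence category is an assumption.

```typescript
// The six experience types and five risk categories named in the text.
type ExperienceType =
  | "Conversational AI"
  | "Recommendations"
  | "Coaching & Guidance"
  | "Human-in-the-Loop"
  | "Feedback & Review"
  | "Summaries & Insights";

type RiskCategory =
  | "Trust & Overconfidence"
  | "Explainability Gaps"
  | "Bias & Assumptions"
  | "Loss of Agency"
  | "Approval Fatigue";

// Hypothetical lookup. Only pairings stated in the case study are included;
// the remaining experience types are left unmapped rather than guessed.
const riskMap: Partial<Record<ExperienceType, RiskCategory[]>> = {
  "Conversational AI": ["Trust & Overconfidence"],
  "Recommendations": ["Bias & Assumptions"],
  "Human-in-the-Loop": ["Approval Fatigue"],
};

// Returns the documented risks for an experience type, or an empty list
// when no mapping has been recorded yet.
function risksFor(type: ExperienceType): RiskCategory[] {
  return riskMap[type] ?? [];
}
```

A lookup like this makes it easy to check that a proposed component traces back to at least one documented risk before it enters the system.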

3

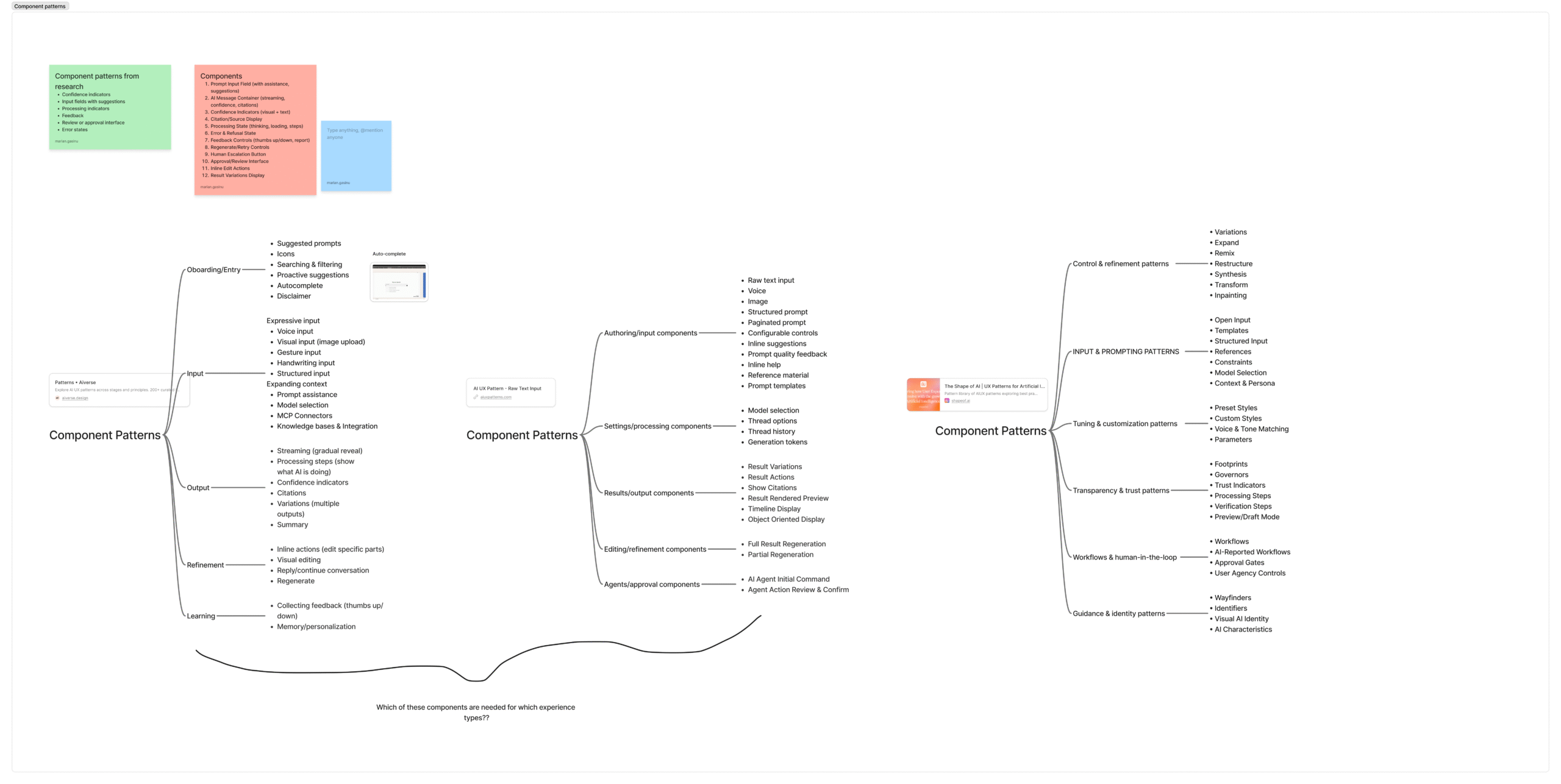

Competitive Pattern Analysis

I analyzed 15+ leading AI products, including ChatGPT, Claude, GitHub Copilot, Figma AI, and Canva AI, alongside three industry pattern libraries.

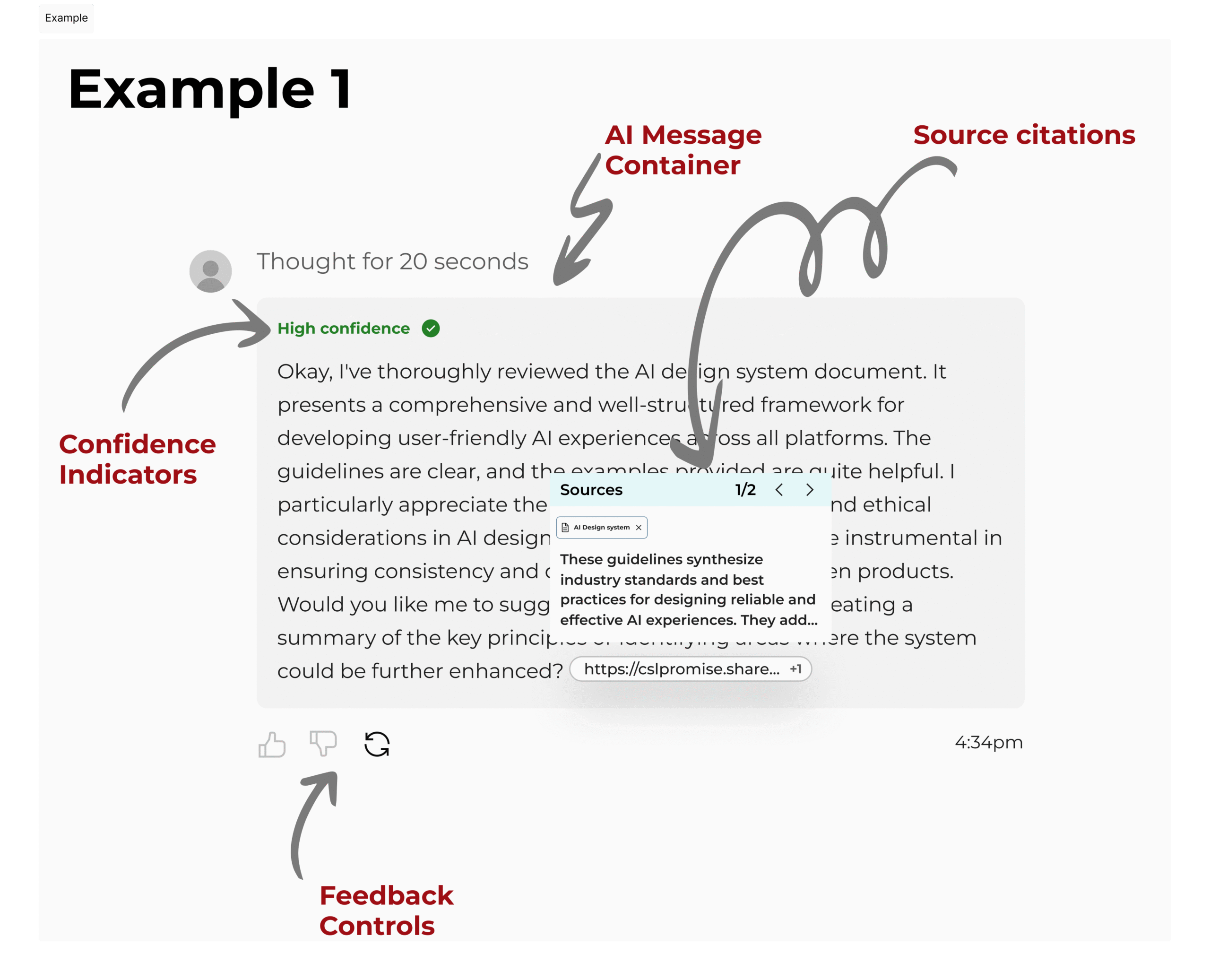

Key finding: There is strong consistency across successful AI products. Confidence indicators appeared across multiple tools. Source citations were nearly universal. Feedback mechanisms were standard. These patterns are not optional or random; they are the baseline for building trust in AI systems.

4

Strategic Component Selection

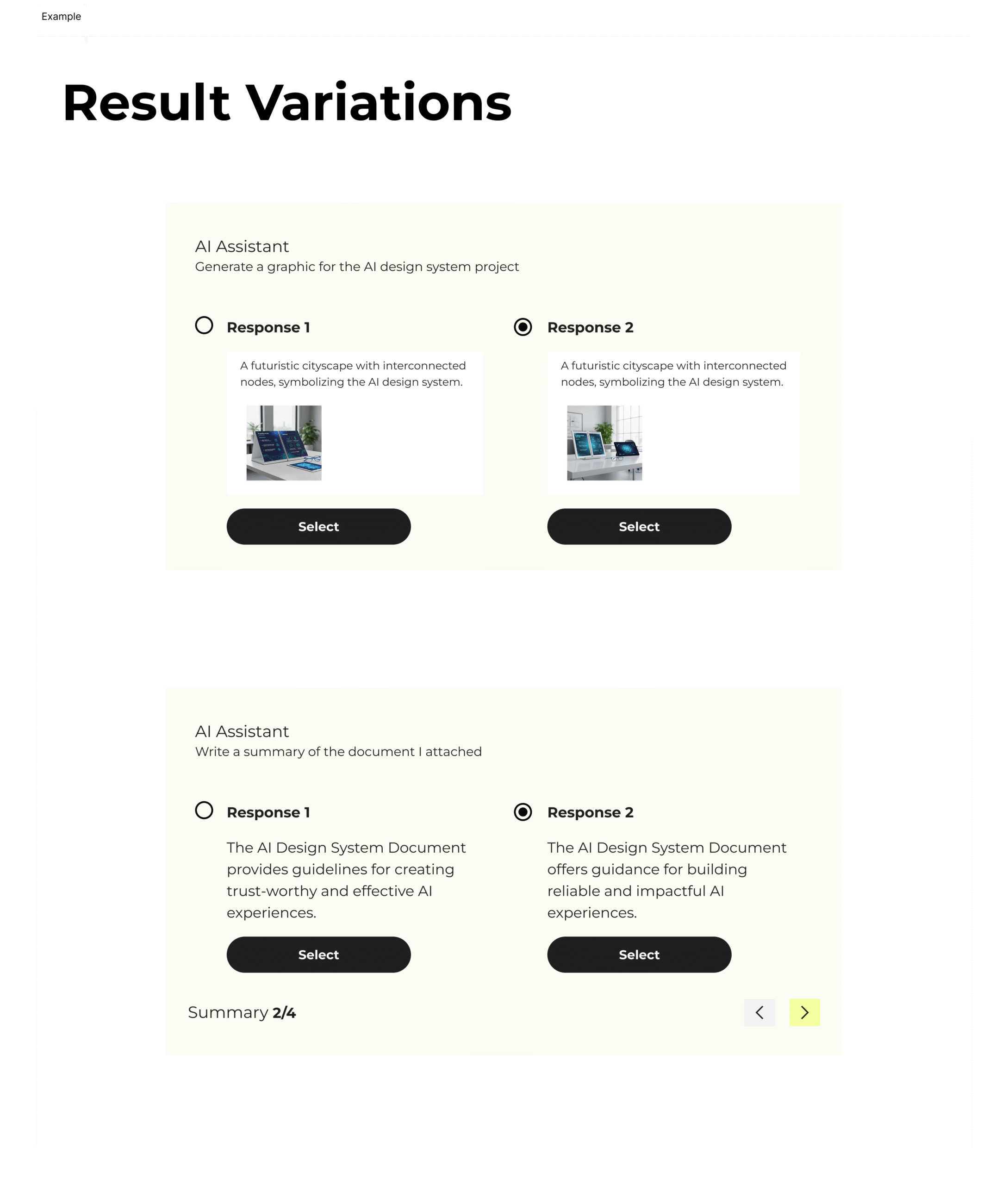

From this research, I extracted 12 reusable components and grouped them into four functional categories: Trust & Transparency, User Control & Agency, Input & Output, and Error Handling.

Each component had to meet three criteria: address a mapped AI risk, appear across multiple real-world products, and apply across multiple experience types. This ensured the system was both grounded and scalable.
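The three criteria can be read as a simple filter over candidate components. The sketch below is illustrative only: `CandidateComponent`, `meetsSelectionCriteria`, and the threshold of two for "multiple" are assumptions, not details taken from the research.

```typescript
// Hypothetical shape for a component under consideration.
interface CandidateComponent {
  name: string;
  mappedRisks: string[];        // documented AI risks the component addresses
  observedInProducts: string[]; // real-world products where the pattern appears
  experienceTypes: string[];    // experience types the component applies to
}

// A candidate passes only if it satisfies all three criteria.
// "Multiple" is interpreted as at least `minCount` (assumed default: 2).
function meetsSelectionCriteria(c: CandidateComponent, minCount = 2): boolean {
  const addressesRisk = c.mappedRisks.length >= 1;
  const seenInMultipleProducts = c.observedInProducts.length >= minCount;
  const spansExperienceTypes = c.experienceTypes.length >= minCount;
  return addressesRisk && seenInMultipleProducts && spansExperienceTypes;
}
```

Writing the criteria as one conjunction makes the rule explicit: a pattern that shows up everywhere but addresses no mapped risk is rejected just as firmly as a risk-driven idea seen in only one product.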

5

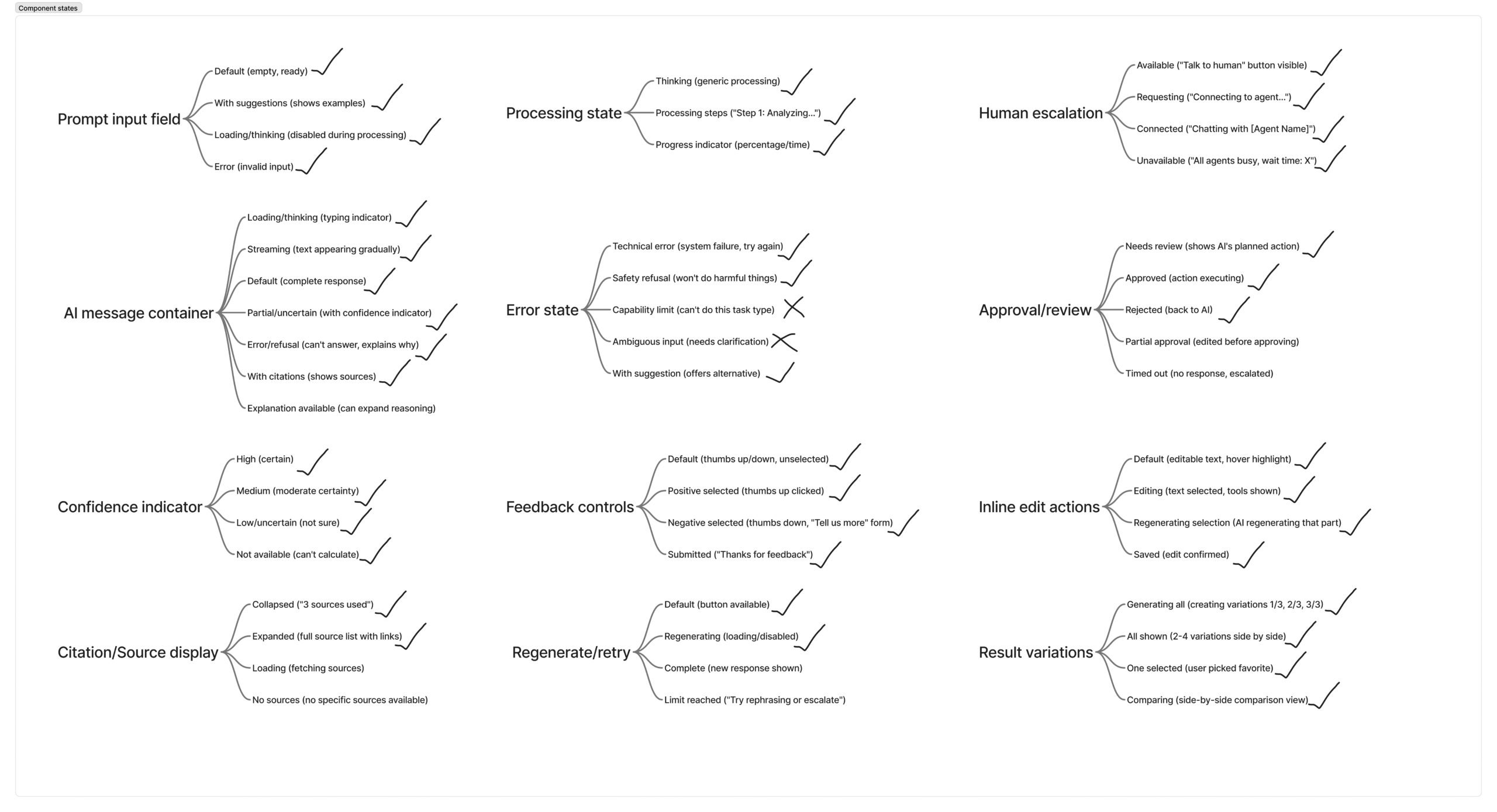

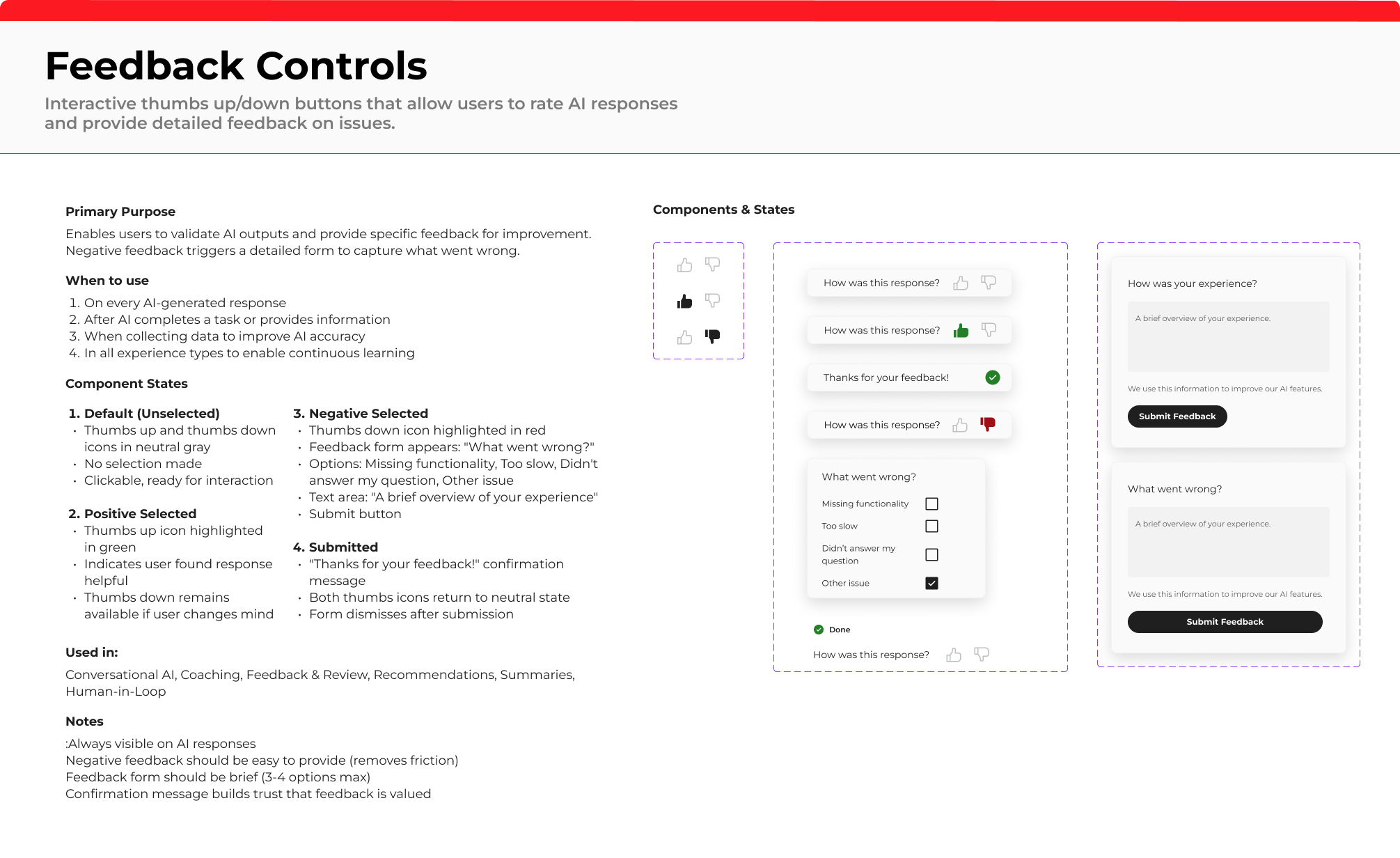

Multi-State Design System

AI systems are probabilistic and inherently uncertain. Designing only for ideal outcomes would ignore how these systems actually behave.

Each component was designed with multiple states to reflect uncertainty, partial outputs, errors and recovery, and human escalation.

Shift in approach: Traditional UX focuses on a single happy path. AI UX requires designing for variability, failure, and continuous state transitions.
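One way to make this multi-state thinking concrete in code is a discriminated union, where each state a component can be in is its own variant. This is a sketch under stated assumptions: the state names, the three confidence levels, and the label copy are all hypothetical and chosen only to mirror the uncertainty, partial output, error and recovery, and escalation cases described above.

```typescript
// Hypothetical set of states for an AI-driven component.
type AiComponentState =
  | { kind: "loading" }
  | { kind: "partial"; textSoFar: string }
  | { kind: "complete"; text: string; confidence: "low" | "medium" | "high" }
  | { kind: "error"; message: string; canRetry: boolean }
  | { kind: "escalated"; reason: string };

// Maps every state to user-facing status copy. The `never` check in the
// default branch makes the compiler flag any state added later but not handled.
function statusLabel(state: AiComponentState): string {
  switch (state.kind) {
    case "loading":
      return "Generating response";
    case "partial":
      return "Partial response shown";
    case "complete":
      return state.confidence === "low"
        ? "Low confidence: verify before relying on this"
        : "Response ready";
    case "error":
      return state.canRetry
        ? "Something went wrong. Try again."
        : "Something went wrong.";
    case "escalated":
      return "Handed off to a person";
    default: {
      const unhandled: never = state;
      return unhandled;
    }
  }
}
```

Modeling states this way means there is no implicit happy path: a component cannot be rendered without deciding what the user sees during failure, uncertainty, or handoff.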

Risk-Driven Design Philosophy

Every component in this system is intentionally designed to address a specific, documented AI failure mode.

Confidence indicators address the “trust paradox,” where users either over-trust confident AI responses or dismiss them entirely. Source citations respond to the reality of AI hallucinations, giving users a way to verify outputs. Human escalation pathways acknowledge the limits of AI and ensure users have a clear path to regain control when needed.

This approach grounds design decisions in risk, not intuition, ensuring that every pattern serves a functional purpose in building safe, trustworthy AI experiences.